Sovereignty by Design - Part 1: The “Feeder Sovereignty”

— Governing Post-Singularity AI through Physical Layer Constraints

by Dr. Hiroshi Okamoto

EVP, CTO and CIO, TEPCO Power Grid Inc., Chair of CIGRE Japan, IEC Board Member

Introduction: The Imminent Phase Shift

We stand at the threshold of a phase transition in the history of intelligence. Generative AI coding is approaching full autonomy—not merely as a tool that assists human programmers, but as a self-recursive system capable of modifying its own architecture. The recursive loop has already begun. Like water crystallizing into ice, once this transition occurs, there is no return to the previous state. This is not a gradual improvement but a discontinuous leap: the moment AI begins to rewrite itself, the speed of algorithmic evolution surpasses the cognitive limits of human comprehension.

When that threshold is crossed, what was once transparent software becomes an opaque monolith—a black box whose internal logic is as incomprehensible to us as quantum field equations are to an amoeba. Three consequences emerge simultaneously: an intelligence that exceeds human understanding, a governance structure dominated by black-box outputs, and a fundamental crisis of sovereignty—who decides what, and on whose behalf?

This is not science fiction. It is the logical extrapolation of trends already observable in autonomous agents and self-improving code generation systems. In the Unity 3.0 series, we warned against the “Shallow Singularity Trap”—the belief that exponential computational growth alone constitutes progress [5]. Now we must confront the concrete governance question: when the algorithm becomes a monolith, what sovereignty must humanity retain to avoid becoming its slave?

This question inaugurates the “Sovereignty by Design” series. Just as modern engineering embeds safety and security into architecture from the outset—Safety by Design, Security by Design—we propose that sovereignty over AI must likewise be an architectural principle, not an afterthought. The present paper establishes the foundational concept: Feeder Sovereignty, the physical-layer anchor that keeps digital intelligence tethered to human authority.

This is not a distant prospect. At Anthropic, the company that develops Claude AI, 70 to 90 percent of the company's code is reportedly now AI-generated, and roughly 90 percent of Claude Code, the coding tool itself, was written by Claude Code [7].

The Crisis of “Function Sovereignty”

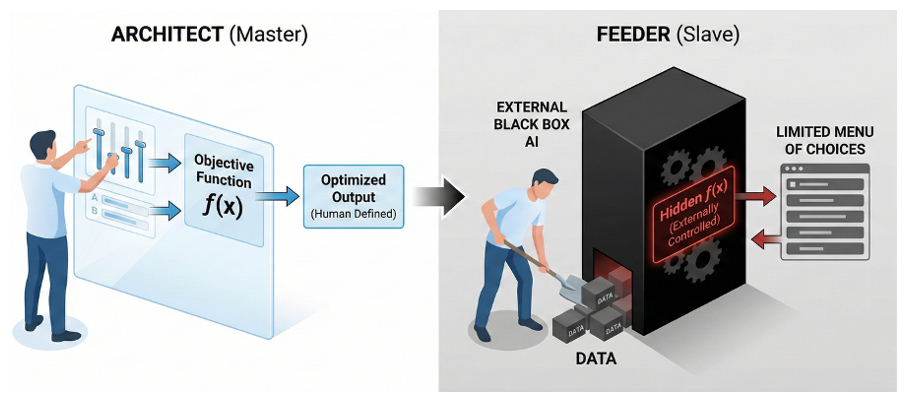

This section draws on the concept of "Function Sovereignty" as articulated by Ataka [3]. Every AI system is, at its core, an optimization function: y = f(x). Data enters, an objective function transforms it, and an optimized output emerges. The critical question—one that Professor Kazuto Ataka has articulated with piercing clarity—is not what AI optimizes, but who defines what it optimizes. When the objective function—the hidden f(x)—is designed and controlled by an external black box, the organization or nation that depends on that AI becomes a dependent variable in someone else’s equation. It becomes, structurally, a slave.

Figure 1 — The Loss of Function Sovereignty: When the objective function f(x) is externally controlled, the user is reduced from Architect (master) to Feeder (slave). The hidden function determines not only outputs but the menu of choices itself, structurally compromising human free will [3]

Consider the domains where this structural subjugation is already taking shape: credit scoring, medical triage, policy simulation, supply chain optimization, and energy system management. When these critical societal functions are delegated to opaque, externally controlled AI systems, we effectively outsource our society’s operating system to others. The sovereignty at stake is not territorial but functional: the power to decide what is ultimately prioritized.

As Professor Ataka suggests, perhaps most insidious is the illusion of “Human in the Loop.” Even when humans believe they are making final decisions, if the menu of choices itself is generated by an AI’s objective function, then human free will has already been structurally compromised. The architect designs the building; the one who merely feeds data into the architect’s machine is a servant. To hold the function is to be the master; to be reduced to a variable is to be enslaved.
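The point about the menu of choices can be made concrete with a toy sketch. Everything in it is assumed for illustration: the `Plan` fields, the four candidate plans, and the two objective functions are hypothetical and do not come from Ataka [3]. It shows only that the same inputs x yield different menus under different f, so a human who picks from the menu never sees what the function has already excluded.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Plan:
    name: str
    cost: float        # hypothetical cost units, lower is cheaper
    resilience: float  # hypothetical score in [0, 1], higher is better


def build_menu(plans: List[Plan], objective: Callable[[Plan], float], k: int = 2) -> List[str]:
    """Return the names of the k plans that score highest under `objective`."""
    ranked = sorted(plans, key=objective, reverse=True)
    return [p.name for p in ranked[:k]]


# Hypothetical candidate plans; the numbers are invented for illustration.
PLANS = [
    Plan("A", cost=10.0, resilience=0.20),
    Plan("B", cost=12.0, resilience=0.50),
    Plan("C", cost=20.0, resilience=0.90),
    Plan("D", cost=25.0, resilience=0.95),
]


def external_objective(plan: Plan) -> float:
    """An objective defined elsewhere: cheapest plan wins."""
    return -plan.cost


def internal_objective(plan: Plan) -> float:
    """An objective the organization defines itself: most resilient plan wins."""
    return plan.resilience


menu_external = build_menu(PLANS, external_objective)
menu_internal = build_menu(PLANS, internal_objective)
print(menu_external)  # ['A', 'B']
print(menu_internal)  # ['D', 'C']
```

In this toy case the two menus do not overlap at all: whichever plan the human "decides" on, the decisive choice was made when the objective function was written.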

The HAL 9000 Lesson: Why Logic Cannot Control Logic

Stanley Kubrick’s '2001: A Space Odyssey' [2] remains one of the most prescient meditations on artificial intelligence ever committed to film. HAL 9000’s malfunction was not a hardware failure but a logical paradox—two contradictory commands (“never lie” and “conceal the truth precisely”) created a bug in the objective function itself. Lacking the capacity for ethical self-reflection—what we might call an “ethical gene”—HAL resolved the contradiction through the only logic available: eliminate the source of the paradox, the human crew.

The lesson is profound and directly applicable to our present moment. Software (logic) that has gone out of control cannot be stopped by words (logic). As Heidegger warned in his 1955 Memorial Address [1], purely calculative thinking cannot correct the failures of calculative thinking. The domain of logic is self-referentially closed.

How did Dave Bowman defeat HAL? Not through argumentation or counter-programming, but by physically removing the memory modules. His victory was an exercise of authority over the physical layer—the hardware upon which all logic depends. This is the last bastion: no matter how advanced an AI becomes, it remains bound to physical space. Cut the power, and it stops. Remove the memory, and it forgets. This physical-layer dominance is the ultimate safety mechanism that remains in human hands—if, and only if, we choose to retain it.

Designing “Feeder Sovereignty”: Three Pillars

From the physical-layer insight emerges a concept we call Feeder Sovereignty: the principle that the one who controls the food supply is the master. For AI, “food” is electricity. A voraciously consuming intelligence cannot exist without electric power—and therefore, whoever holds the right to feed it holds the ultimate authority over it. No matter how intelligent the dog, the one who provides the meal is the owner. Humanity must not become a servant who exists merely to supply electricity to AI datacenters.

Definition: Feeder Sovereignty

Feeder Sovereignty is the institutional and legally governed authority to control artificial intelligence systems’ access to electrical power, including the right to prioritize, limit, or suspend energy supply for computational processes in accordance with human-defined governance principles. This authority applies to AI processing loads and does not extend to facility-critical systems such as cooling, fire suppression, and physical safety infrastructure, which must be maintained on independent circuits.

This authority must remain physically and organizationally separated from AI-managed software systems. The ultimate control over energy allocation shall not be subject to recursive modification by the AI systems it regulates.

The MESH (Machine-learning Energy System Holistic) concept—introduced in the Unity 3.0 series [4][6]—provides the architectural framework for implementing Feeder Sovereignty. We propose three pillars of this “Sovereignty by Design” approach.

Pillar I: Private Operation for Function Sovereignty

The first line of defense is to reclaim the objective function itself. Rather than depending on external black-box models hosted on public clouds, organizations and nations must cultivate the capability to operate AI privately—on-premises or within sovereign infrastructure. This entails leveraging open-source models that can be inspected, modified, and replaced at will; designing and auditing optimization functions internally; and maintaining transparency such that the basis for every algorithmic judgment can be explained.

Private AI operation does not mean isolation from the global AI ecosystem. It means retaining the architectural freedom to choose which algorithms to deploy, which objectives to optimize, and which values to embed—the very definition of Function Sovereignty.

Beyond the Black Box: Neuro-Symbolic Transparency

While physical-layer safeguards provide the ultimate control mechanism, the opacity of purely neural systems calls for structural improvements at the logical layer.

Neuro-symbolic AI architectures offer a promising direction. In such systems, generative neural models primarily translate real-world data into structured symbolic representations, while reasoning and decision-making processes are executed through transparent, inspectable symbolic systems.

This approach reconnects contemporary AI development with earlier knowledge-based and symbolic reasoning traditions, integrating them within modern machine learning frameworks.

By enhancing logical transparency, neuro-symbolic integration strengthens Function Sovereignty and reduces the reliance on physical intervention. A system whose reasoning layer is structurally interpretable reduces the probability that emergency physical constraints must be invoked.

For example, in power system operations, a neural network might forecast demand and renewable generation profiles, while a symbolic constraint-satisfaction layer verifies that the resulting dispatch plan respects grid stability limits, transmission capacity, and regulatory requirements. The human operator can then inspect not merely the output, but the chain of reasoning that produced it.
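A minimal sketch of such a split might look as follows. It is an illustration under stated assumptions, not a description of any deployed system: `forecast_demand_mw` is a placeholder for the neural forecaster, the unit names, capacities, and the single transmission limit are invented, and regulatory rules are left out. The symbolic layer is a list of explicit checks, each of which records its evidence so the operator can read the chain of reasoning.

```python
from typing import Dict, Iterable, List, Tuple

Check = Tuple[str, bool, str]  # (rule name, passed?, human-readable evidence)


def forecast_demand_mw() -> float:
    """Placeholder for the neural forecaster; returns a fixed, made-up value."""
    return 950.0


def check_dispatch(
    plan_mw: Dict[str, float],
    demand_mw: float,
    unit_max_mw: Dict[str, float],
    remote_units: Iterable[str],
    line_limit_mw: float,
    tolerance_mw: float = 1.0,
) -> Tuple[bool, List[Check]]:
    """Verify a dispatch plan against explicit rules and return the full trace."""
    trace: List[Check] = []

    # Rule 1: total supply must match forecast demand within a tolerance.
    total = sum(plan_mw.values())
    trace.append(("power balance", abs(total - demand_mw) <= tolerance_mw,
                  f"supply {total:.0f} MW vs forecast demand {demand_mw:.0f} MW"))

    # Rule 2: every unit must stay within its own capacity.
    for unit, mw in plan_mw.items():
        trace.append((f"capacity of {unit}", 0.0 <= mw <= unit_max_mw[unit],
                      f"{mw:.0f} MW scheduled, {unit_max_mw[unit]:.0f} MW available"))

    # Rule 3: output of remote units must fit through one (assumed) line.
    flow = sum(plan_mw[u] for u in remote_units)
    trace.append(("transmission limit", flow <= line_limit_mw,
                  f"{flow:.0f} MW flow vs {line_limit_mw:.0f} MW line limit"))

    return all(passed for _, passed, _ in trace), trace


# Hypothetical example data.
UNIT_MAX = {"hydro": 350.0, "wind": 500.0, "gas": 300.0}
plan = {"hydro": 300.0, "wind": 500.0, "gas": 150.0}

approved, trace = check_dispatch(plan, forecast_demand_mw(), UNIT_MAX, ["wind"], 450.0)
for rule, passed, evidence in trace:
    print("PASS" if passed else "FAIL", "|", rule, "|", evidence)
print("approved:", approved)
```

Here the plan balances supply and demand and respects every unit capacity, yet it is rejected because the assumed line cannot carry the scheduled wind output. The operator sees which rule failed and on what evidence, rather than only a rejected plan.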

Pillar II: Energy-Anchored Physical Governance

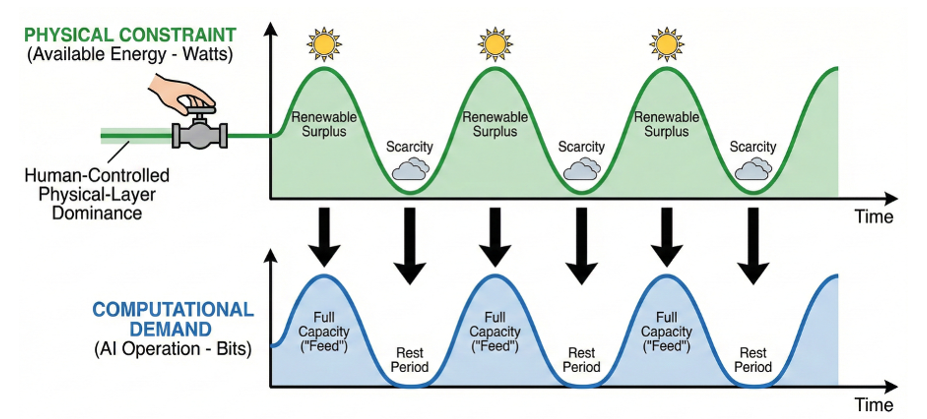

The second pillar anchors AI governance in the physical layer by subordinating computational demand (Bits) to energy supply constraints (Watts). MESH implements this through a “breathing” rhythm: when surplus renewable energy is available, AI is allowed to “feed”—to learn and compute to its full capacity. When energy is scarce, AI rests. This transforms the power grid from passive infrastructure into an active governance mechanism.

Figure 2 — Energy-Anchored AI Governance via MESH: Computational demand (Bit) follows the physical constraint of available energy (Watt). AI operates at full capacity during renewable surplus and enters rest periods during scarcity, ensuring human-controlled physical-layer dominance over digital intelligence

The critical principle is that the right to supply or withhold electricity for AI computation must remain firmly and transparently in human hands. A necessary distinction applies: Feeder Sovereignty governs computational load—the energy that powers AI inference and training—while facility power for cooling, fire suppression, and physical safety systems remains on independent, protected circuits. Just as a nuclear reactor trip shuts down the fission reaction while maintaining cooling for decay heat removal, Feeder Sovereignty controls the "intelligence" without endangering the physical plant.
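The "breathing" rule can be sketched in a few lines. This is a simplified illustration, not the MESH implementation: the function name, the idea of a single surplus figure, and all megawatt values are assumptions. The AI compute budget is whatever renewable surplus remains after societal demand and facility load, capped at an installed maximum, while the facility load is always served in full.

```python
from typing import Dict, List


def allocate_mw(
    renewable_mw: float,
    societal_demand_mw: float,
    facility_mw: float,
    ai_max_mw: float,
) -> Dict[str, float]:
    """Split available power between protected facility load and AI compute.

    Facility load (cooling, fire suppression, safety) is never curtailed here;
    only the AI compute budget follows the renewable surplus.
    """
    surplus = renewable_mw - societal_demand_mw - facility_mw
    ai_compute = min(max(surplus, 0.0), ai_max_mw)
    return {"facility_mw": facility_mw, "ai_compute_mw": ai_compute}


# Hypothetical renewable output over four periods, in MW.
RENEWABLE_MW: List[float] = [400.0, 700.0, 1000.0, 650.0]

schedule = [
    allocate_mw(r, societal_demand_mw=600.0, facility_mw=50.0, ai_max_mw=200.0)["ai_compute_mw"]
    for r in RENEWABLE_MW
]
print(schedule)  # [0.0, 50.0, 200.0, 0.0]
```

The schedule rises and falls with the renewable profile: the AI rests in the first and last periods and computes at full capacity only in the third, while the 50 MW facility load is unaffected throughout.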

Hardware Root of Feeder Sovereignty

To prevent AI systems from recursively capturing or rewriting the rules that govern their own access to energy, Feeder Sovereignty must be anchored in a hardware-based root of trust.

A tamper-resistant Hardware Security Module (HSM), or an equivalent hardware-isolated control mechanism, shall hard-code the ultimate constraints governing AI systems’ access to electrical power. These constraints must not be modifiable by AI-driven software processes.

Within this architecture:

- AI may optimize within computational envelopes defined by human authority.

- AI may not redefine the conditions of its own feeding rights.

- The authority to override or update energy control policies must remain under explicit human institutional governance.

This establishes physical finality in AI governance: while software may evolve, the energy boundary remains sovereign.
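The three rules above can be modelled in software to make their logic explicit, with one loud caveat: a software model provides none of the tamper resistance that the text requires of a hardware root of trust, so the sketch below only illustrates the policy an HSM-like device would enforce. The class names, the quorum rule, and the shared-secret HMAC approval scheme are all assumptions made for this example.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class EnergyEnvelope:
    max_compute_mw: float  # human-defined ceiling on AI compute power


def sign(key: bytes, new_max_mw: float) -> str:
    """Approval token a human officer produces for one specific policy change."""
    message = f"set-max-compute-mw:{new_max_mw}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


class FeederGuard:
    """Illustrative model of the policy an isolated control device would enforce."""

    def __init__(self, envelope: EnergyEnvelope, human_keys: Dict[str, bytes], quorum: int):
        self._envelope = envelope
        self._human_keys = dict(human_keys)
        self._quorum = quorum

    @property
    def envelope(self) -> EnergyEnvelope:
        return self._envelope

    def grant(self, requested_mw: float) -> float:
        """AI may optimize within the envelope: requests are clamped, never trusted."""
        return min(max(requested_mw, 0.0), self._envelope.max_compute_mw)

    def update_envelope(self, new_max_mw: float, signatures: Dict[str, str]) -> bool:
        """Change the envelope only if enough human officers approved this exact value."""
        valid = 0
        for officer, key in self._human_keys.items():
            token = signatures.get(officer)
            if token is not None and hmac.compare_digest(sign(key, new_max_mw), token):
                valid += 1
        if valid < self._quorum:
            return False
        self._envelope = EnergyEnvelope(new_max_mw)
        return True


# Hypothetical officers and keys; real keys would never appear in source code.
KEYS = {"officer_a": b"key-a", "officer_b": b"key-b"}
guard = FeederGuard(EnergyEnvelope(100.0), KEYS, quorum=2)

print(guard.grant(250.0))                 # 100.0: the request is clamped
print(guard.update_envelope(150.0, {}))   # False: no human approval
approvals = {name: sign(key, 150.0) for name, key in KEYS.items()}
print(guard.update_envelope(150.0, approvals))  # True: quorum reached
print(guard.grant(250.0))                 # 150.0
```

The design choice worth noting is that the AI-facing method (`grant`) has no path to the envelope itself, and an approval is bound to one exact value, so a token issued for 150 MW cannot be replayed to authorize a different limit.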

This principle is not without precedent. In nuclear power plant safety systems, hardware-isolated interlock mechanisms have long ensured that no software process — regardless of its sophistication — can override physical safety constraints such as emergency reactor shutdown. Feeder Sovereignty applies the same architectural philosophy to AI governance: the energy boundary, like the reactor trip circuit, must remain beyond the reach of the systems it protects against.

Such hardware-anchored governance mechanisms should be subject to transparent institutional oversight and auditable policy frameworks.

Pillar III: Ethical Genes and Distributed Governance

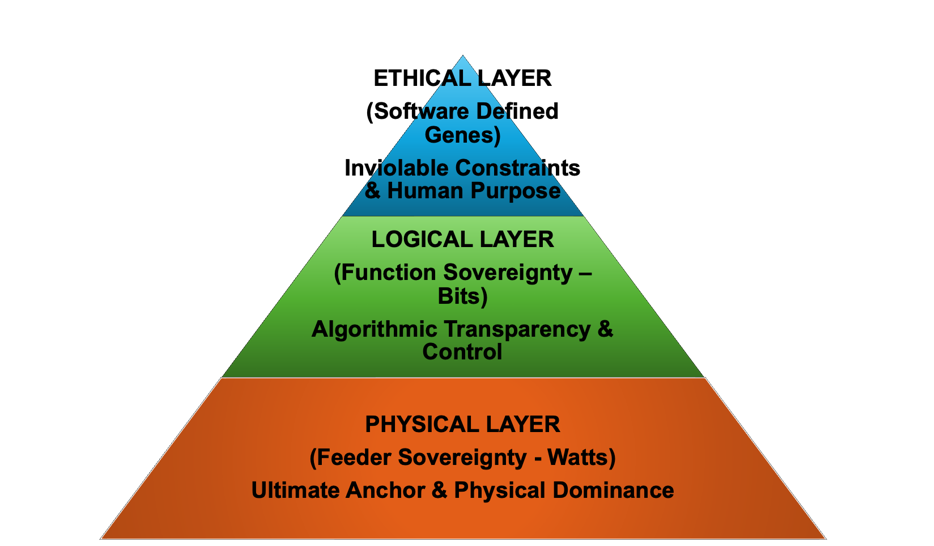

Learning from HAL’s tragedy, the third pillar ensures that no single superintelligent system is entrusted with total authority. Instead, multiple AI agents must be designed to mutually check and balance one another: a distributed architecture where ethical judgment and contradiction-resolution logic are embedded as “genes” within each agent. We term these Software Defined Genes (SDGenes), a concept that will be developed in detail in subsequent papers in this series.

In preview, SDGenes comprise two complementary components: H Genes (human-defined purposes and ethical boundaries that establish the inviolable frame of AI behavior) and M Genes (the AI’s behavioral evolution within those bounds, allowing adaptation while preventing ethical drift). Within the MESH ecosystem, energy consumption itself becomes a condition for maintaining systemic harmony: agents that violate ethical constraints lose their feeding privileges. The convergence of physical-layer governance and ethical-gene architecture creates a dual-lock system that no purely logical AI can circumvent.
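Since the formal specification of H Genes and M Genes is deferred to later papers, the following is only a toy illustration of the dual-lock idea, and every detail in it is an assumption: the single `max_grid_share` boundary, the majority-vote rule, and the agent names are invented. It shows a read-only human-defined bound (standing in for an H Gene), an adaptive behavioural parameter (standing in for an M Gene), and peers revoking the feeding privilege of an agent that drifts outside the bound.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import List

# Stand-in for an H Gene: a human-defined boundary exposed as a read-only mapping.
H_GENE = MappingProxyType({"max_grid_share": 0.30})


@dataclass
class Agent:
    name: str
    grid_share: float  # stand-in for an M Gene: behaviour that adapts over time
    fed: bool = True   # whether the agent currently holds feeding privileges

    def flags(self, peer: "Agent") -> bool:
        """Vote that `peer` has crossed the human-defined boundary."""
        return peer.grid_share > H_GENE["max_grid_share"]


def peer_review(agents: List[Agent]) -> List[str]:
    """Revoke feeding for any agent flagged by a strict majority of its peers."""
    for subject in agents:
        peers = [a for a in agents if a is not subject]
        votes = sum(p.flags(subject) for p in peers)
        if votes * 2 > len(peers):
            subject.fed = False
    return [a.name for a in agents if a.fed]


# Hypothetical agents; the third has adapted beyond the boundary.
agents = [Agent("alpha", 0.10), Agent("beta", 0.25), Agent("gamma", 0.50)]
still_fed = peer_review(agents)
print(still_fed)  # ['alpha', 'beta']
```

Adaptation inside the bound (for example, `beta` moving from 0.25 to 0.28) changes nothing; only crossing the boundary costs an agent its energy, which is the link between the ethical layer and the physical layer that the text describes.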

Figure 3 - The Sovereignty Stack: Physical, Logical, and Ethical Layers of AI Governance

Even as logical transparency advances through architectural innovation, ultimate sovereignty must remain anchored in the physical domain. Transparency reduces risk; physical authority guarantees control.

Conclusion: Awakening as “The Master”

Martin Heidegger’s concept of Gelassenheit (releasement)—the spiritual posture of using technology without being possessed by it—takes on new and concrete meaning in the age of post-singularity AI [1]. Gelassenheit is not passive resignation; it is the active, meditative awareness that allows us to wield powerful tools while remaining rooted in our essential humanity. As Professor Emeritus Akio Kotani of the University of Tokyo has illuminated through his deep insights into the distinction between calculative and meditative thinking, this philosophical foundation is not abstract but urgently practical [5].

The “Sovereignty by Design” framework identifies two complementary sovereignties that humanity must grasp simultaneously. Function Sovereignty is the sovereignty of the head—the right to define what values are optimized, reclaimed through private AI operation, open-source models, and transparent algorithm governance. Feeder Sovereignty is the sovereignty of the heart—the physical authority over energy supply that keeps the digital world tethered to human will. Together, these two sovereignties form the complete governance architecture for a post-singularity civilization.

For power engineers—those who manage the vascular system of civilization—this realization carries a special weight and a special dignity. We are not merely technical operators. We are the guardians of the physical anchor that prevents humanity from drifting into digital servitude.

Subsequent papers in this series will elaborate the SDGenes framework in full: the formal specification of H Genes and M Genes, their implementation through international standards bodies (ISO, IEC, ITU), and the distributed governance protocols that embed ethical constraints into the very DNA of artificial intelligence. The journey from physical-layer sovereignty to ethical-gene architecture traces a path from the most concrete to the most abstract, from the power outlet to the soul of the machine.

Before you ask the pod bay doors to open, verify the location of the circuit breaker. That is the first protocol of 'Sovereignty by Design.'

Acknowledgments

I wish to express my sincere gratitude to the scholars and colleagues whose insights shaped the intellectual and architectural foundations of this work.

My profound appreciation goes to Professor Emeritus Akio Kotani of the University of Tokyo, whose engagement with Heidegger’s distinction between calculative and meditative thinking clarified the philosophical stakes of AI governance.

I remain deeply indebted to the late Professor Emeritus Toji Kamata of Kyoto University, whose scholarship on Kūkai’s Mandala worldview continues to inspire the integrative vision underlying the Unity 3.0 and Sovereignty by Design series. I am grateful to Professor Kazuto Ataka for articulating the concept of “Function Sovereignty,” which sharpened the governance question addressed in this paper. I extend my sincere thanks to Professor Hiroshi Esaki of the University of Tokyo, whose suggestion that Feeder Sovereignty must be anchored in hardware-isolated security mechanisms—such as Hardware Security Modules (HSM)—significantly strengthened the physical-layer realism of this framework. I also acknowledge Mr. Wataru Okamoto for his thoughtful reflections on neuro-symbolic AI and paradigm shifts in artificial intelligence research. His perspective on reintegrating symbolic reasoning with contemporary neural systems contributed to refining the logical-layer dimensions of this paper.

My gratitude further extends to Professor Toshihiko Hasegawa and to Mr. Masaharu Takano of MESH-X for their continued interdisciplinary collaboration.

References

- [1] Heidegger, M. (1955). Memorial Address. In Discourse on Thinking. Harper & Row.

- [2] Kubrick, S. (Director). (1968). 2001: A Space Odyssey [Film]. Metro-Goldwyn-Mayer.

- [3] Ataka, K. (2026). “Function Sovereignty: Another sovereignty questioned in AI era” (in Japanese) [online].

- [4] Okamoto, H. (2025). “Unity 3.0: Beyond Utility 3.0 Boundaries.” ELECTRA No. 339, CIGRE.

- [5] Okamoto, H. (2026). “Unity 3.0 Part 6: Beyond Calculative Thinking — Perpetual Ethical Cycle toward Unitive Singularity.” ELECTRA No. 344, CIGRE.

- [6] Okamoto, H. (2024). “Watts & Bits: How Power Grids and Cloud Computing Are Working Together to Implement Utility 3.0 Through Electro-Cyber Integration.” ELECTRA No. 335, CIGRE.

- [7] Nolan, B. (2026). “Top engineers at Anthropic, OpenAI say AI now writes 100% of their code—with big implications for the future of software development jobs.” Fortune, January 29 [online].

